How to Set Up Effective Alert Routing Without Breaking the Bank

At 3 AM, your monitoring system sends an alert. The database connection pool is exhausted, response times are increasing, and customers are starting to notice. The alert goes to a Slack channel visible to forty engineers, but no one responds, assuming someone else will take care of it.

This is an alert routing failure, and it costs teams more than they realize: longer downtime, alert fatigue, burned-out on-call engineers, and a gradual loss of trust in your monitoring system.

The good news is that effective alert routing doesn’t require a big budget. It needs clear thinking, intentional design, and the commitment to test what you’ve created.

What Is Alert Routing?

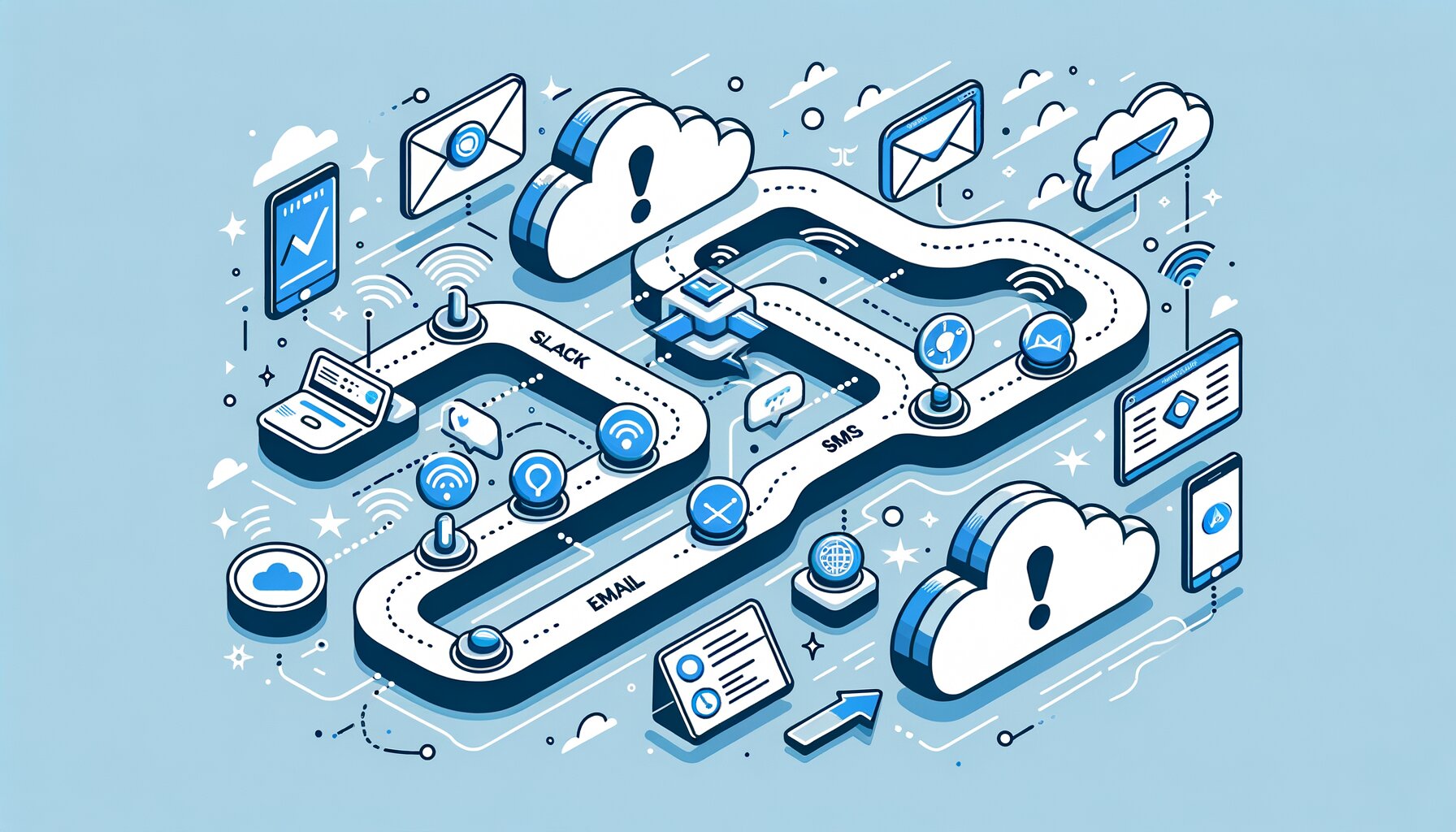

Alert routing directs notifications from a monitoring system to the right person, through the right channel, at the right time. It connects your observability tools — like Prometheus, Datadog, and CloudWatch — to the people who need to respond.

In essence, alert routing answers three questions:

- Who needs to know?

- How should they be notified?

- What happens if they don’t respond?

Webhook alert routing connects monitoring tools to a routing layer using HTTP callbacks. Your monitoring tool sends a webhook, the routing system evaluates it against its rules, and the system then sends notifications to the appropriate recipients. The details of that evaluation step decide whether the alert reaches anyone: which rule matches, and what happens when none does.
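Before any rule runs, the routing layer has to parse the webhook into something it can reason about. Here is a minimal Python sketch of that first step. The payload shape is loosely modeled on Prometheus Alertmanager's webhook format (a top-level `alerts` list whose entries carry `status`, `labels`, and `annotations`); the `service` and `severity` label names are assumptions about your own labeling conventions, and tools such as Datadog or CloudWatch send different JSON that would need its own parser.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    service: str
    severity: str
    summary: str
    firing: bool


def parse_webhook(body: bytes) -> list[Alert]:
    """Turn one webhook body into normalized Alert records for the routing rules."""
    payload = json.loads(body)
    alerts = []
    for item in payload.get("alerts", []):
        labels = item.get("labels", {})
        annotations = item.get("annotations", {})
        alerts.append(
            Alert(
                # Assumed label names; adjust to match how your alert rules are labeled.
                service=labels.get("service", "unknown"),
                # A missing severity falls back to SEV3 so the alert still lands
                # somewhere visible instead of being dropped or paging someone.
                severity=labels.get("severity", "SEV3"),
                summary=annotations.get("summary", labels.get("alertname", "")),
                firing=item.get("status") == "firing",
            )
        )
    return alerts
```

Normalizing first means the routing rules only ever see one shape of alert, whichever tool sent it.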

The Three Principles of Good Alert Routing

Before making any changes, internalize these principles to avoid creating a system that looks advanced but falters under real pressure.

Right Person

Not every alert should reach all engineers. A frontend performance issue doesn’t need to wake the database team, and a payment processing failure shouldn’t go to the intern who just started.

Align alerts with service ownership. If your team has clear service ownership, your routing rules should mirror that. The on-call engineer for the payments service gets payment alerts, while the platform team handles infrastructure alerts. Although this seems obvious, many teams still dump all alerts into one channel, hoping for the best.
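Mirroring ownership can be as simple as a lookup table kept next to your service catalog. The Python sketch below uses hypothetical service and rotation names. The useful detail is that an unowned service still routes to a human but is flagged, so ownership gaps surface instead of silently falling into a default.

```python
# Hypothetical ownership map: service name -> on-call rotation that owns it.
OWNERS = {
    "payments": "payments-oncall",
    "checkout-frontend": "frontend-oncall",
    "postgres": "platform-oncall",
    "kubernetes": "platform-oncall",
}

# Unowned services still need a human; the platform rotation is an assumed default.
FALLBACK_ROTATION = "platform-oncall"


def route_by_owner(service: str) -> tuple[str, bool]:
    """Return (rotation, explicitly_owned) for an alert's service."""
    if service in OWNERS:
        return OWNERS[service], True
    # Fall back, but report that nobody has claimed this service yet.
    return FALLBACK_ROTATION, False
```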

Right Channel

The severity of an alert determines the channel. A warning about rising disk usage belongs in Slack or email, while a complete service outage should be communicated via phone call or SMS. Multi-channel notifications should match urgency with the appropriate medium.

Consider the receiver’s perspective. If every alert comes as a phone call, engineers start ignoring their phones. If critical alerts only appear in Slack, they get lost among GIF reactions and thread replies. The channel is part of the message: it tells the receiver how urgently to act.

Right Time

Timing involves two aspects: when to escalate and when to suppress. If the primary on-call engineer doesn’t acknowledge an alert within ten minutes, it should escalate. If the same alert triggers multiple times in an hour, the subsequent notifications should be grouped or suppressed.
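The suppression half of that rule can be sketched as a per-key time window. This Python example assumes each alert has a stable key (for instance service plus alert name, a hypothetical convention) and that the caller passes in the current time in seconds. The one-hour window matches the example above, and it is measured from the last notification actually sent, so a continuously firing alert re-notifies at most once per hour.

```python
from dataclasses import dataclass, field


@dataclass
class Suppressor:
    """Send the first notification for a key, then suppress repeats within a window."""

    window_seconds: float = 3600.0
    _last_sent: dict[str, float] = field(default_factory=dict, init=False, repr=False)
    _suppressed: dict[str, int] = field(default_factory=dict, init=False, repr=False)

    def should_notify(self, key: str, now: float) -> bool:
        last = self._last_sent.get(key)
        if last is not None and now - last < self.window_seconds:
            # Inside the window: count the repeat so a later summary can report it.
            self._suppressed[key] = self._suppressed.get(key, 0) + 1
            return False
        self._last_sent[key] = now
        self._suppressed[key] = 0
        return True

    def suppressed_count(self, key: str) -> int:
        return self._suppressed.get(key, 0)
```

Counting suppressed repeats rather than discarding them lets the next notification say how often the alert fired in the meantime, which is the "grouped" option from the text.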

An effective alert escalation policy outlines these timing rules so that people don’t have to make judgments at 3 AM. The system manages the decision-making, while the engineer deals with the incident.

Practical Setup: Four Steps to Reliable Alert Routing

Step 1: Define Your Severity Levels

Start with three to five severity levels. More than that leads to decision paralysis, while fewer don’t provide enough detail.

A typical model includes:

- SEV1 — Critical: Customer-facing outage, risk of data loss, security breach. Immediate response required.

- SEV2 — High: Degraded service, significant performance impact. Response within 15–30 minutes.

- SEV3 — Medium: Non-critical component failure, elevated error rates. Response during business hours.

- SEV4 — Low: Warnings, capacity planning signals. Review in the next working session.

Document these levels and ensure every engineer on your team can classify an alert without needing a reference. Severity levels are effective only when shared among the team.
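One way to make classification reference-free is to write the definitions as a short decision procedure, checked from most to least severe. The yes/no flags in this Python sketch are a hypothetical simplification of the SEV1 to SEV4 definitions above; real incidents still need judgment, but writing the rules down this way exposes ambiguities before 3 AM does.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical yes/no facts an engineer can answer quickly about an alert."""

    customer_facing_outage: bool = False
    data_loss_risk: bool = False
    security_breach: bool = False
    degraded_service: bool = False  # significant performance impact, still serving
    component_failure: bool = False  # a non-critical component is down
    elevated_errors: bool = False


def classify(obs: Observation) -> str:
    """Map an observation to SEV1-SEV4, checking the most severe definition first."""
    if obs.customer_facing_outage or obs.data_loss_risk or obs.security_breach:
        return "SEV1"
    if obs.degraded_service:
        return "SEV2"
    if obs.component_failure or obs.elevated_errors:
        return "SEV3"
    # Everything else (warnings, capacity signals) waits for the next working session.
    return "SEV4"
```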

Step 2: Map Channels to Severity

Once you’ve defined severity levels, assign notification channels:

| Severity | Primary Channel | Secondary Channel |
|---|---|---|
| SEV1 | Phone call + SMS | Slack + Email |
| SEV2 | SMS + Slack | |
| SEV3 | Slack | Email digest |
| SEV4 | Email digest | Dashboard |

This mapping ensures that a SEV1 incident prompts immediate action, while a SEV4 observation waits in an inbox. Adjust it to your team’s preferences, but resist pushing every alert to the loudest channel. If everything is urgent, nothing truly is.
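The table translates directly into configuration. This Python sketch stores it as data and adds a hypothetical lint check for the failure mode described above: low-severity alerts configured to phone or text someone. The channel names are placeholders for whatever your notification layer supports.

```python
# Primary and secondary channels per severity, mirroring the table in this step.
CHANNELS: dict[str, tuple[list[str], list[str]]] = {
    "SEV1": (["phone", "sms"], ["slack", "email"]),
    "SEV2": (["sms", "slack"], []),
    "SEV3": (["slack"], ["email_digest"]),
    "SEV4": (["email_digest"], ["dashboard"]),
}

# Channels that interrupt someone regardless of what they are doing.
INTERRUPTIVE = {"phone", "sms"}


def channels_for(severity: str, table=CHANNELS) -> list[str]:
    """All channels to notify for a severity, primary channels first."""
    primary, secondary = table[severity]
    return primary + secondary


def noisy_low_severities(table=CHANNELS) -> list[str]:
    """Return SEV3/SEV4 entries that would interrupt someone by phone or SMS."""
    return [sev for sev in ("SEV3", "SEV4") if INTERRUPTIVE & set(channels_for(sev, table))]
```

Running a check like this in CI keeps the "if everything is urgent" drift from creeping in one config change at a time.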

Step 3: Build Your Escalation Policies

An alert escalation policy outlines what happens when there’s no response. Every critical alert path should include:

- Primary responder: The current on-call engineer.

- First escalation (after ~10 min): Secondary on-call or team lead.

- Second escalation (after ~25 min): Engineering manager or incident commander.

- Final escalation (after ~45 min): Department head or VP of Engineering.

Timing will vary based on your SLAs and team size. A small startup has different escalation needs than a larger platform team. Ensure no alert goes unnoticed. If the primary responder doesn’t act, someone else must.
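Because the right timings depend on your SLAs, it can help to check a policy against them mechanically. The Python sketch below represents a policy as (minutes without acknowledgment, target) pairs using the example timings from this step. The role names and the three checks are hypothetical heuristics, not a standard.

```python
# (minutes since the alert fired without acknowledgment, who gets notified)
EXAMPLE_POLICY = [
    (0, "primary-oncall"),
    (10, "secondary-oncall"),
    (25, "incident-commander"),
    (45, "vp-engineering"),
]


def validate_policy(steps: list[tuple[int, str]], response_sla_minutes: int) -> list[str]:
    """Return problems with an escalation policy; an empty list means none were found."""
    problems = []
    if len(steps) < 2:
        problems.append("no one backs up the primary responder")
    delays = [after for after, _ in steps]
    # Duplicates or out-of-order delays both make this comparison fail.
    if delays != sorted(set(delays)):
        problems.append("escalation delays must be strictly increasing")
    if steps and steps[0][0] != 0:
        problems.append("primary responder is not notified immediately")
    if len(steps) >= 2 and steps[1][0] > response_sla_minutes:
        problems.append("first escalation fires after the response SLA is already missed")
    return problems
```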

For webhook setups, ensure your routing layer tracks acknowledgment status. When the webhook fires, the notification goes out, and the system checks for acknowledgment. If there’s no acknowledgment within the set time, escalate.
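That fire, notify, check, escalate loop can be sketched as a small state machine. This Python example keeps state in memory and expects the caller to invoke `tick` periodically (every 30 seconds, say) with the current time in seconds; a real routing layer would persist this state so a restart does not forget open alerts. The `notify` callback, the role names, and the step timings (the roughly 10/25/45-minute policy from this step, in seconds) are hypothetical stand-ins.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# (seconds since firing without acknowledgment, who to notify)
STEPS = [(0, "primary-oncall"), (600, "secondary-oncall"), (1500, "incident-commander"), (2700, "vp-engineering")]


@dataclass
class OpenAlert:
    fired_at: float
    acked_at: Optional[float] = None
    notified: set[str] = field(default_factory=set)


class AckTracker:
    def __init__(self, notify: Callable[[str, str], None]):
        self._notify = notify  # called as notify(alert_id, target)
        self._open: dict[str, OpenAlert] = {}

    def fire(self, alert_id: str, now: float) -> None:
        self._open[alert_id] = OpenAlert(fired_at=now)
        self.tick(now)  # the primary responder is notified right away

    def acknowledge(self, alert_id: str, now: float) -> None:
        alert = self._open.get(alert_id)
        if alert is not None and alert.acked_at is None:
            alert.acked_at = now

    def tick(self, now: float) -> None:
        """Escalate every unacknowledged alert whose next step is due."""
        for alert_id, alert in self._open.items():
            if alert.acked_at is not None:
                continue  # someone owns it; stop escalating
            elapsed = now - alert.fired_at
            for after, target in STEPS:
                if elapsed >= after and target not in alert.notified:
                    alert.notified.add(target)
                    self._notify(alert_id, target)
```

Escalation here adds people rather than replacing the primary responder, so the original on-call engineer keeps being in the loop while backup arrives.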

Step 4: Test Regularly

This is where many teams fall short. They create a solid routing configuration, verify it once, and then ignore it until an incident reveals that the on-call schedule has changed, the escalation path points to someone who’s left, and the SMS gateway credentials expired months ago.

Schedule monthly routing tests. Run a synthetic SEV1 alert through your entire process. Confirm that the right person receives the correct notification on the appropriate channel within the expected timeframe. Treat your alert routing like your backups: if it’s untested, it’s broken.
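Part of the monthly test can be automated. This Python sketch sends a clearly labeled synthetic SEV1 through the routing decision and checks the result against the current on-call roster, which catches escalation paths that still point to someone who has left. `route` is a hypothetical stand-in for your real routing layer, and the sketch only verifies the decision: confirming that the phone actually rings still needs a person on the receiving end.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Planned:
    recipient: str
    channel: str


def route(alert: dict) -> list[Planned]:
    """Hypothetical stand-in for your routing layer; replace with a call into the real one."""
    if alert["severity"] == "SEV1":
        return [Planned("alice", "phone"), Planned("alice", "sms")]
    return [Planned("payments-team", "slack")]


def synthetic_sev1_check(active_roster: set[str]) -> list[str]:
    """Route a synthetic SEV1 and report anything that would break a real page."""
    alert = {"service": "payments", "severity": "SEV1", "summary": "SYNTHETIC TEST, please acknowledge"}
    planned = route(alert)
    failures = []
    if not planned:
        failures.append("SEV1 routed to nobody")
    if not any(p.channel == "phone" for p in planned):
        failures.append("SEV1 does not produce a phone call")
    stale = {p.recipient for p in planned} - active_roster
    if stale:
        failures.append(f"routes to people not on the current roster: {sorted(stale)}")
    return failures
```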

Cost Considerations: Don’t Overpay for Simplicity

Many incident management platforms charge per seat, meaning your costs increase with team size. For a small team, that might be manageable, but for a larger team with multiple services, expenses can quickly add up.

Before committing to a platform, do the math:

- Per-seat pricing: Multiply the per-user cost by every engineer who might be on-call. Don’t forget managers in escalation paths and contractors covering holiday weekends. The total is often higher than expected.

- Per-notification pricing: Some platforms charge for SMS or voice delivery on top of the subscription. At scale, a noisy alert that fires a hundred times without grouping can produce an unexpected bill.

- Flat-fee models: Some newer tools offer flat-rate pricing regardless of team size. For growing teams, this can be significantly more cost-effective and removes the incentive to limit on-call participants.
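Doing the math is easy to script. The Python sketch below compares a per-seat plan that bills SMS and voice separately against a flat-fee plan. Every price and count in it is an illustrative placeholder, not any vendor's actual pricing; substitute your own quotes and your real on-call headcount.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    flat_fee: float = 0.0  # monthly charge regardless of team size
    per_seat: float = 0.0  # monthly charge per user
    per_sms: float = 0.0  # per-message charge, if SMS is billed separately
    per_call: float = 0.0  # per-call charge, if voice is billed separately

    def monthly_cost(self, seats: int, sms: int, calls: int) -> float:
        return self.flat_fee + seats * self.per_seat + sms * self.per_sms + calls * self.per_call


# Illustrative numbers only: 18 on-call engineers + 4 managers + 3 holiday contractors.
SEATS, SMS, CALLS = 25, 400, 60

per_seat_plan = Plan("per-seat", per_seat=21.0, per_sms=0.05, per_call=0.25)
flat_plan = Plan("flat-fee", flat_fee=300.0)

for plan in (per_seat_plan, flat_plan):
    print(f"{plan.name}: ${plan.monthly_cost(SEATS, SMS, CALLS):,.2f}/month")
```

With these placeholder inputs the script prints $560.00 for the per-seat plan and $300.00 for the flat-fee plan. Note that the four managers and three contractors add 7 × 21 = $147 to the per-seat total but nothing to the flat fee, which is exactly the pressure to trim escalation paths described below.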

The best alert routing tool is one that your entire team can use effectively. If per-seat costs prevent engineers from being included in escalation paths, you may save on licensing but pay for slower incident response.

Wrapping Up

Effective alert routing is essential for every team managing production services, not just those with enterprise budgets. Define your severity levels, thoughtfully map your channels, create escalation policies with clear accountability, and regularly test the system.

Tooling is evolving to make this accessible to smaller teams. Products like PagerBolt offer straightforward multi-channel incident notifications without the complexity or high costs that have traditionally come with them.

Start simple and iterate. Make sure your alerts don’t get lost in a Slack channel at 3 AM.

This post was written by the PagerBolt team — engineers who’ve been on the wrong end of a missed page and decided to do something about it.